In the early 2020s, health equity surged to the forefront of the pharmaceutical industry’s agenda. The COVID-19 pandemic exposed deep and persistent disparities in outcomes, and companies responded with new commitments, initiatives, and investments aimed at closing those gaps.

Six years later, the environment has changed. Companies have scaled back public-facing equity commitments, rebranded initiatives, or shifted resources elsewhere. And yet the underlying need has only grown more urgent. The excess infant mortality rate among Black children relative to white children has grown from 92% in the 1950s to 115% today[1]. Only 41% of Black adults and 34% of Hispanic adults now consider basic care affordable, down from 2021, while the rate for white adults has remained steady[2].

In our experience working with pharmaceutical companies, leaders see the business and social value of advancing health equity. Companies are evolving from asking “why should we pursue this?” to “how can we pursue it in a way that is compliant, sustainable, and aligned with business priorities in a rapidly changing environment?”

Industry leaders recognize that this moment calls not for a retreat of health equity efforts, but for an evolution.

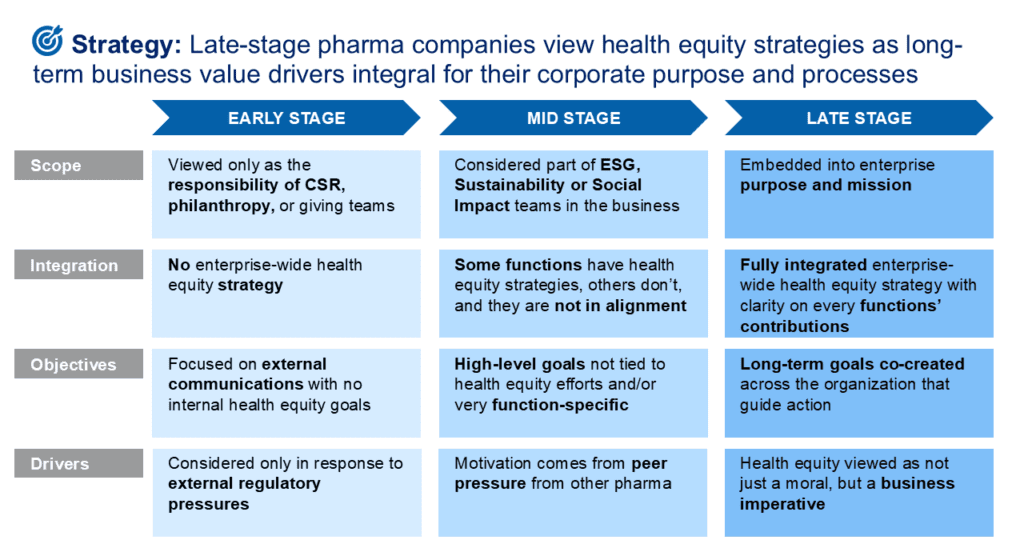

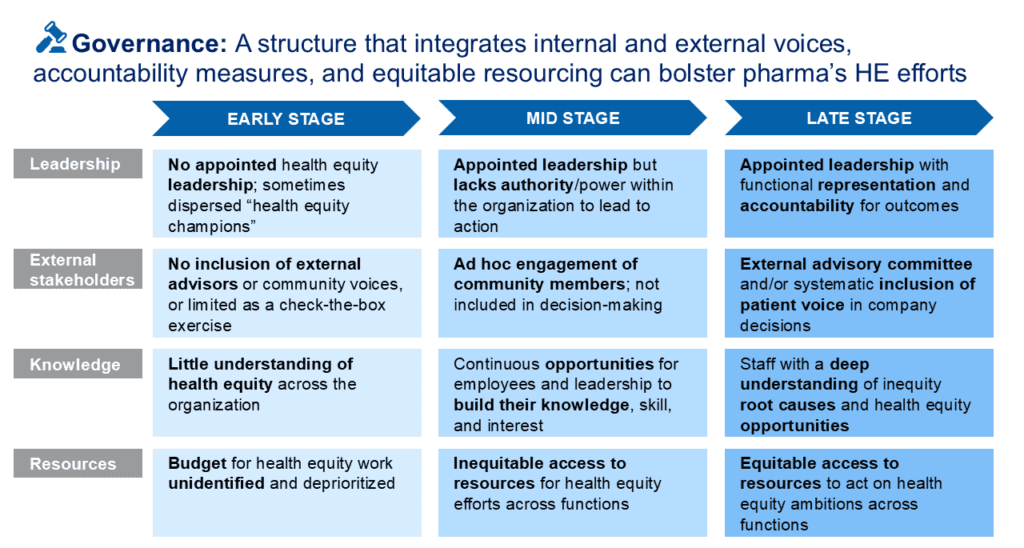

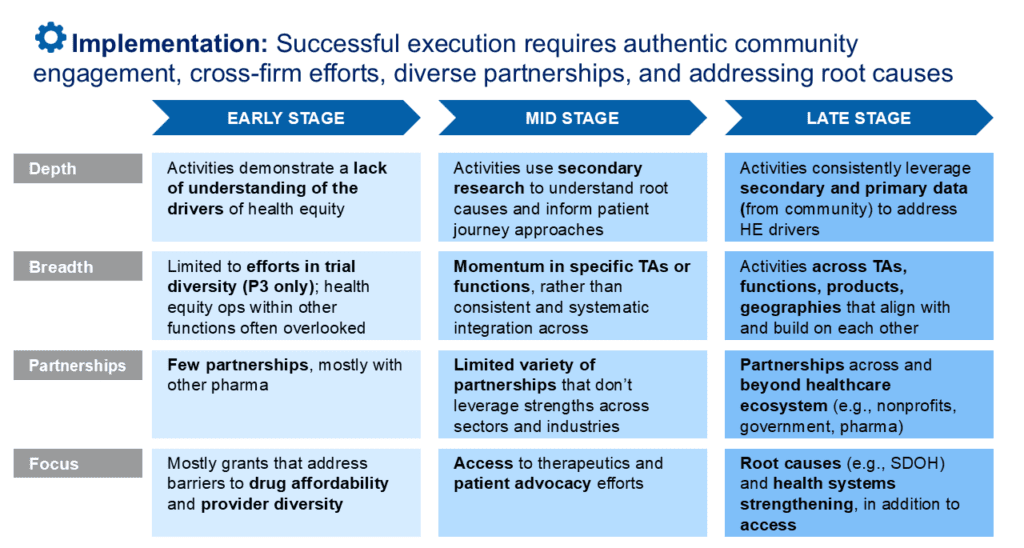

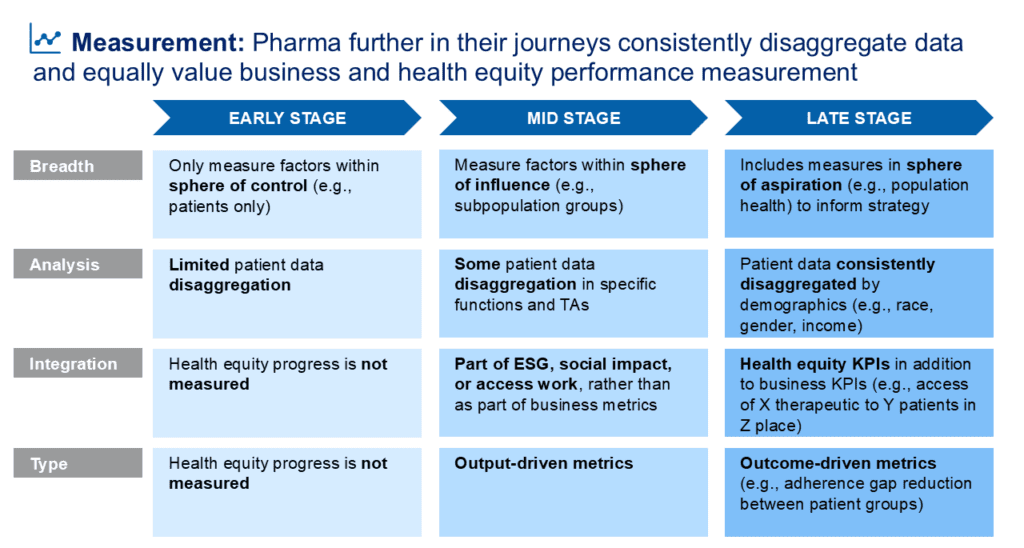

Health equity progress is rarely linear. Companies advance through distinct stages, and under current pressures, some are regressing. The maturity models below map where companies typically stand, and where they tend to stall or backslide, across four key dimensions: Strategy, Governance, Implementation, and Measurement.

For each dimension, companies tend to move from early-stage siloing and reactive responses toward late-stage integration and long-term commitment, though few reach late stage consistently across all four.

Even among industry leaders, late-stage activity tends to be limited and rarely spans all four dimensions. No U.S. company is consistently operating in the late stage. The patterns below explain why, including why some companies reached the late stage and then backslid, and the opportunities point toward what it would take to get there.

Integrating Business and Health Equity

Pattern: Siloing health equity within foundations or ESG teams

The healthcare ecosystem at large has historically viewed efforts to eliminate health inequities as philanthropic opportunities. Pharma has often approached them as a moral obligation or reputational opportunity. Structurally, this has meant making health equity the sole responsibility of ESG, Sustainability, or Social Impact units, or of the corporate foundation. This limits impact, as initiatives cannot fully leverage enterprise capabilities or inform core strategy.

In today’s environment, siloing also introduces risk. When health equity is treated as a standalone initiative rather than as integral to business operations, it becomes more vulnerable to shifting political sentiment, leadership transitions, and budget pressures. When times are tough, health equity work is the first to go. Companies also risk forfeiting competitive advantage: capabilities such as community relationships, representative data infrastructure, and culturally fluent engagement models take years to build. Allowing them to atrophy leaves companies behind when regulatory, market, or investor expectations shift, or when patient populations further diversify and companies face losing market share.

Opportunity: Integrating health equity into the core business, not only as a path toward greater impact, but also as a prerequisite for enduring politically charged times

In some organizations, previously siloed social impact or sustainability teams can act as conveners, bringing together leaders from R&D, medical affairs, market access, commercial, and supply chain to apply a consistent health equity lens across the product lifecycle. Where anti-kickback concerns require formal separation between philanthropic and commercial activity, companies can still align around shared principles, priorities, and high-level metrics.

For integration to be durable, companies may also need to be more intentional about framing. Grounding health equity efforts in business-relevant language (e.g., “ensuring representative evidence”, “closing care gaps”, “improving access”, “expanding responsible market reach”) ties them directly to R&D rigor, commercial excellence, and risk management. When equity efforts are measured against enterprise KPIs and embedded in operating rhythms, they are less vulnerable to political cycles and budget contraction.

Expanding Beyond Access Programs

Pattern: Favoring access programs as the silver bullet to advance health equity

When asked how they advance health equity, pharma leaders frequently point to patient assistance programs. These initiatives help uninsured and underinsured patients obtain medications and maintain adherence, and they matter. But access programs alone do not fully leverage the pharmaceutical industry’s influence within the healthcare ecosystem. They address cost at the point of treatment, but do little to tackle structural barriers affecting diagnosis, referral, enrollment, or navigation. As a result, companies may not see the improvements in population health they strive for.

In today’s environment, this narrow focus also introduces vulnerability. Policymakers and payers increasingly scrutinize access programs, and without broader system alignment, these programs can be perceived as transactional rather than transformative.

Opportunity: Moving beyond access toward systems-level change that addresses root causes

As a central actor in the healthcare ecosystem, pharmaceutical companies are uniquely positioned to influence system-level conditions that shape patient outcomes. Advanced health equity strategies expand beyond access programs to include deliberate investments in healthcare system strengthening, building capacity, coordination, and effectiveness across multiple actors and addressing the structural and social determinants of health that influence outcomes before treatment even begins.

In practice, this can include investments in building a stronger, more resilient healthcare workforce (e.g., increasing provider cultural competency, diversifying healthcare leadership), integrating health equity into supply chain planning (e.g., diversifying suppliers, investing in manufacturing in medically underserved areas), fostering equitable data practices (e.g., disaggregating all patient data, supplementing clinical indicators with indicators of patients’ lived experiences), and bridging gaps across the care continuum (e.g., actively collaborating with payers, providers, community health workers, and community organizations).

These efforts align with long-term business interests: by strengthening the systems that enable patients to be healthy, pharmaceutical companies drive better patient outcomes and reinforce the foundations that allow their market to thrive.

Making a Long-Term Effort

Pattern: Expecting rapid change when health equity moves at a different pace

Corporations’ need to report quarterly performance creates pressure for quick ROI. This often leads to health equity initiatives being confined to more straightforward, easily measurable programs that promise “quick wins.” As a result, more innovative, high-risk efforts – those that could yield greater long-term impact – are often sidelined. When outcomes don’t meet expectations due to ambitious goals set with limited resources and time, pharma frequently cuts commitments short.

In a volatile external environment, this pressure is compounded by uncertainty. Leaders may hesitate to commit to long-horizon strategies and default to reversible or low-visibility initiatives. This dynamic limits the potential for transformational impact and reinforces fragmented approaches.

Opportunity: Normalizing health equity as a long-term transformational effort

Companies that make sustained progress invest in learning agendas with frequent reflection and strategy adaptation, as well as measurement frameworks that capture both near-term indicators and long-term system change. Metrics are not limited to external impact but also encompass internal change management processes, helping reinforce to leaders that they are on the right track.

Leaders can also focus on proof-of-concept initiatives or pilots when they begin a new health equity initiative, gradually scaling efforts as they demonstrate clear, measurable benefits. This approach can allow companies to demonstrate value while preserving flexibility and adaptability as external conditions evolve.

Strengthening Data Assets

Pattern: Treating data as a gap to manage rather than an asset to build

Many pharmaceutical companies lack the data they need to make equitable decisions. Demographic and socioeconomic data are often incomplete, inconsistently collected, legally sensitive, or, where they exist, not used by leadership. Clinical, commercial, and community-level data remain fragmented across systems. These gaps hinder prioritization, limit the ability to identify which populations are being underserved, and make it nearly impossible to measure whether health equity efforts are working.

Rapid advances in AI have added urgency and complexity to this challenge. Companies fall into two camps: those moving quickly to deploy AI tools in isolated pilots without addressing data quality or bias risks, and those hesitating out of concern for regulatory scrutiny or reputational exposure. In both cases, the result is the same: fragmentation persists. When AI systems are layered onto incomplete or unrepresentative data, they risk amplifying existing disparities at scale. When adoption stalls out of fear or ambiguity, organizations forfeit the opportunity to generate insight and strengthen decision-making.

Opportunity: Building data infrastructure as a durable competitive asset

Organizations that invest in robust, representative data collection and data governance will have a significant advantage when regulatory expectations around trial diversity and real-world evidence tighten, when payers demand more granular outcome data, and when the ability to demonstrate population-level impact becomes a market differentiator.

In practice, this means disaggregating data by demographic indicators (race, ethnicity, income) where possible; integrating clinical, real-world, and operational data across functions; using proxy indicators such as geography, access points, and payer mix where direct demographic data is constrained; and incorporating patient-reported outcomes to better capture lived experience. But data is only as valuable as the decisions it informs. Companies that make sustained progress build feedback loops that bring data insights back into strategy, adjusting their approaches based on what the evidence is actually showing about which populations are being reached, where drop-off is happening, and what is working. On the AI side, realizing the potential requires robust governance: transparent model documentation, regular bias audits, and human oversight in high-stakes decisions. The companies that will lead are those that treat data integrity and AI governance not as a legal checkbox, but as foundational to the health equity work itself.
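To make the disaggregation and proxy-indicator steps above concrete, here is a minimal sketch in Python. It is illustrative only: the records, the field names (`race_ethnicity`, `region`, `started_therapy`), and the `disaggregate` helper are all hypothetical, and a real implementation would need to follow the applicable privacy, legal, and data-governance requirements.

```python
from collections import defaultdict

# Hypothetical patient-level records; every field name and value is illustrative.
RECORDS = [
    {"race_ethnicity": "Black", "region": "Urban", "started_therapy": True},
    {"race_ethnicity": "Black", "region": "Urban", "started_therapy": False},
    {"race_ethnicity": "Hispanic", "region": "Rural", "started_therapy": True},
    {"race_ethnicity": "White", "region": "Urban", "started_therapy": True},
    {"race_ethnicity": "White", "region": "Rural", "started_therapy": True},
    {"race_ethnicity": None, "region": "Rural", "started_therapy": False},
    {"race_ethnicity": None, "region": "Rural", "started_therapy": True},
    {"race_ethnicity": "Hispanic", "region": "Urban", "started_therapy": False},
]


def disaggregate(records, outcome_key, group_key, fallback_key=None):
    """Return {group: (outcome_rate, record_count)}.

    When group_key is missing on a record, fallback_key (an assumed proxy
    indicator such as geography) is used and the group is labeled "proxy:...".
    Without a fallback, such records are grouped under "unknown".
    """
    counts = defaultdict(lambda: [0, 0])  # group -> [outcome_count, total]
    for rec in records:
        group = rec.get(group_key)
        if group is None and fallback_key is not None:
            group = f"proxy:{rec.get(fallback_key, 'unknown')}"
        elif group is None:
            group = "unknown"
        counts[group][1] += 1
        if rec[outcome_key]:
            counts[group][0] += 1
    return {g: (hits / total, total) for g, (hits, total) in counts.items()}


rates = disaggregate(
    RECORDS, "started_therapy", "race_ethnicity", fallback_key="region"
)
for group, (rate, n) in sorted(rates.items()):
    print(f"{group}: {rate:.0%} started therapy (n={n})")
```

Reporting the record count alongside each rate is a deliberate choice: small groups produce unstable rates, and labeling proxy-derived groups separately keeps inferred categories from being mistaken for self-reported ones.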

Conclusion

The journey toward health equity in the pharmaceutical industry has reached a critical inflection point. While the surge of public commitments in the early 2020s has since met strong headwinds, the underlying need for equity has only become more urgent. It’s time to evolve, not retreat.

Companies that get four things right (integrating health equity into the core business, moving beyond access programs, committing to long-term transformation, and building representative data infrastructure) will find that more equitable science and more effective commercial strategies follow. The equity work and the business outcomes become the same work.

Pharma companies that make this shift will not just fulfill a social responsibility. They will build a more resilient, patient-centered business, one where the next chapter of medical innovation is built to reach every patient, everywhere. The goal is to build something that lasts.

[1] Paternina‑Caicedo, A., Espinosa, O., Sheth, S. S., Hupert, N., & Saghafian, S. (2025). Excess mortality rate in Black children since 1950 in the United States: A 70‑year population‑based study of racial inequalities. Annals of Internal Medicine, 178(4), 490–497.

[2] West Health & Gallup. (2025). Tracking healthcare affordability and value: The West Health–Gallup healthcare indices report. Gallup, Inc.