This post originally appeared on the Social Impact Analysts Association's blog.

There are two exciting forces at play in the world of social impact.

First, social change funders and actors are taking on increasingly complex challenges, requiring tools “beyond the grant” such as advocacy, network and coalition building, collective impact and systems change. These pioneers are catalyzing change where the paths are uncharted and the solutions have not yet been invented – they are pursuing social innovation. This is a powerful trend.

At the same time, social change funders and actors are taking on increased accountability for results. They care about measuring inputs, outcomes and ultimately impact. They want to know if their strategies are working and they want to quantify the exact impact they’ve had. This is also a powerful trend.

However, when these two trends come together (complex problem solving and a thirst for monitoring and evaluation) they often result in a toxic environment that stifles innovation. Why is that?

The reason, as explained in FSG’s new report “Evaluating Social Innovation,” is that social innovation requires a different approach to evaluation.

Imagine the following scenario. You have decided to tackle youth unemployment in your hometown. You know that there are numerous root causes, dozens of actors that need to contribute to the problem-solving process and a wide range of external factors that you can’t control, including elections, the economy, the revolving door of business and civil society leaders and the egos of all involved. It is hard to tell what success will look like; maybe getting the right actors to agree to work together systematically is already a big win?

Great news: a foundation is willing to fund your efforts, but with the following stipulations. You need to achieve impact within exactly 24 months. You need to define (“SMART”) outcomes now, preferably predicting how many youths each quarter will gain employment based on your efforts. Exactly 12 months in, you need to demonstrate mid-point progress toward those pre-defined outcomes.

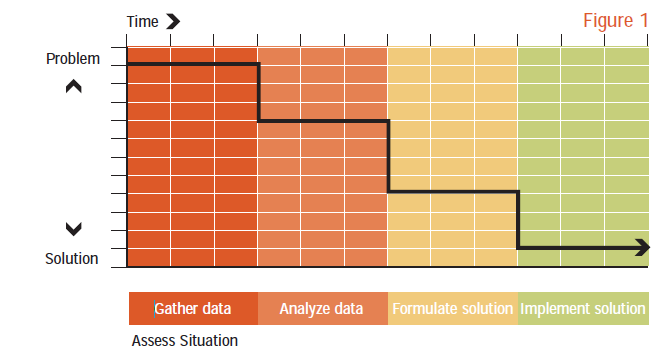

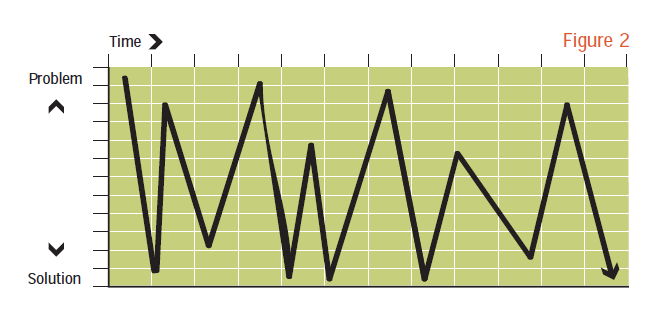

The illustration below, taken from “A Developmental Evaluation Primer” shows this phenomenon graphically.

The foundation wants your efforts to look like this (linear and predictable) and will evaluate you accordingly:

However since in reality you’re going after town-wide systems change (and not developing yet another job skills training center), your efforts will unfold more like this:

The mid-point evaluation of this effort would show that hardly any progress had been made and might conclude that your effort had failed and should not receive further funding.

However, rather than assessing exactly how far the effort has come toward achieving (prematurely defined) outcomes, more interesting lines of inquiry include:

- Can we discern the critical junctures and factors that have resulted in the spikes and troughs?

- We came pretty close to solving the problem a few times… what happened?

- How has the effort shifted course to accommodate learnings along the way?

- To what extent were the initial hypotheses going into the effort correct?

- What have we learned about the actors and external factors that play an influential role?

- How should the strategy change going forward?

Developmental evaluation, described in more detail in FSG’s new report, is focused on answering these types of questions as the effort unfolds. It does not provide a point-in-time snapshot of the exact outcomes achieved. Rather, it does justice to the unpredictable and creative ways social innovation unfolds and allows funders and actors to learn, adapt, challenge their assumptions, make decisions and improve their strategies in real time.

If you are funding or implementing solutions that follow the pattern depicted in Figure 2 above, then developmental evaluation might just be for you!